On Adding Dimensions: A Long-Overdue Conversation With Waking Life’s Tech Wizard, Bob Sabiston

More than a decade before Trioscope Studios produced Netflix’s The Liberator, software engineer Bob Sabiston had already mastered the elusive art of turning three dimensions into two. His groundbreaking program, Rotoshop, was used to create the animated dreamscapes of Richard Linklater’s critically acclaimed Waking Life and went on to lay the groundwork for the director’s cerebral adaptation of Philip K. Dick’s A Scanner Darkly. Fourteen years later, Sabiston, now a VR game developer who considers his filmmaking days behind him, reflects on the monumental innovations he has brought to the animation industry, and wonders where the technologies he helped introduce might take the medium in the future.

A Scanner Darkly

Offscreen: Thanks for being able to talk to me on such short notice. I’m a huge fan of your work and very excited to talk to you. Let’s get the obvious stuff out of the way first. How much do you know about Trioscope?

Bob: Please take any of my comments with a grain of salt, because I don’t really consider myself an expert in this field as it stands today. I definitely spent a lot of time in it, but it’s been several years since I actually worked with rotoscoping. I haven’t watched The Liberator as it just came out today, but I watched the trailer and I read a little bit of the marketing material that I saw online, though I haven’t read anything in detail about their animation process.

Offscreen: Which is totally fine as, in a way, that’s sort of what we’re all doing here. The talented people who produced The Liberator made statements here and there, but they have yet to give a detailed explanation of how their series works on a technical level. And since we’re all in the dark, a guess from someone with your expertise is certainly valuable. I think so, at least.

Bob: My gut feeling is that it’s some sort of Adobe After Effects filter, like these apps on your phone that apply a cartoon look to the camera. Judging from the trailer, I didn’t see much of what I would consider hand-drawn, but I could be wrong.

Offscreen: Trioscope Studios, which produced The Liberator, said that their process differs from traditional rotoscoping in that their technology readily masks their live-action footage with that graphic novel look we see on screen.

Bob: During filming?

Offscreen: I am not sure. The producers have said their technique does a lot of the work “prior to the live-action shoot” as opposed to post-production, where most conventional CGI work takes place, so I’m assuming it’s been worked into the cameras one way or another.

Bob: Well, with all these apps which have come out in the past few years that do similar things, it doesn’t surprise me that they’re reaching a point where such an effect can be achieved in real time. It definitely seems like that’s the way things are heading.

A Scanner Darkly

Offscreen: Those apps that you’re referring to, like some Snapchat filters, take live-action footage of physical objects and apply 2D filters onto them — hundreds of millions of people use them every day, but very few know how they actually work. My guess is that the software is trained to recognize specific colors, shapes or features. Am I on the right track?

Bob: I think you are. Again, I wouldn’t be surprised if there was some sort of machine learning aspect to it that identifies elements of the image. What’s tricky is that most phone apps operate on a frame-by-frame basis. If you were to place two frames side by side, they might look the same. But actually seeing the process in motion, there’s a lot of flickering and jittering, because the software doesn’t do anything to maintain cohesiveness across that motion. Compare that to the cross-hatching you see on the faces of the actors on Netflix — they’re kept pretty stable. It’s clearly on another level than what our phones can pull off.
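
The flicker Bob describes can be illustrated with a toy example. The sketch below is an assumption for illustration only, not how any phone app or Trioscope actually works: it treats a filter's output as one number per frame (say, the brightness of a hatching line) and shows how blending each frame with the previous result, an exponential moving average, reduces frame-to-frame jitter. The function names and the `alpha` parameter are hypothetical.

```python
from typing import List


def smooth_over_time(per_frame_values: List[float], alpha: float = 0.5) -> List[float]:
    """Blend each frame's value with the previous smoothed value.

    alpha = 1.0 keeps the raw per-frame result (no temporal coherence);
    smaller values lean more heavily on what came before.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: List[float] = []
    for value in per_frame_values:
        if not smoothed:
            # The first frame has no history to blend with.
            smoothed.append(value)
        else:
            smoothed.append(alpha * value + (1.0 - alpha) * smoothed[-1])
    return smoothed


def total_jitter(values: List[float]) -> float:
    """Sum of absolute changes between consecutive frames."""
    return sum(abs(b - a) for a, b in zip(values, values[1:]))


# A filter that decides independently on every frame may flip back and forth.
raw = [0.0, 1.0, 0.0, 1.0]
steady = smooth_over_time(raw, alpha=0.5)
print(total_jitter(raw), total_jitter(steady))
```

The trade-off is lag: the more a frame leans on its predecessors, the slower the image responds to real motion.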

Offscreen: What you just said bears an interesting relationship to Rotoshop, which is the software you yourself developed, and which can be seen in the movies that you’ve worked on. For example, that flickering and jittering, which has been sifted out of The Liberator, features prominently in Waking Life. One of the great visual qualities of that film is that, in between frames, the forms representing the actors constantly change shapes and sizes, but never to the point of completely losing their resemblance to the subject. It gives the film a sense of liveliness.

Bob: Yes. One of the things that we were excited about when making Waking Life was to play with the tool’s ability to shift those shapes around. Sometimes it would follow the video footage very closely. At other times, it would go off on its own in ways the animator wanted it to do.

Loving Vincent

Offscreen: Even though you are relying on technology to create this effect, the result comes off very painterly, almost like Loving Vincent. That movie wasn’t animated in a traditional sense either. Rather, each frame is an individual painting.

Bob: Hand-painted paintings, too; not on a computer.

Offscreen: They created thousands of paintings that, when viewed in quick succession, create the illusion of movement. However, because these paintings were done by hand, without relying on the exactness of software, let alone traditional animation techniques, they draw attention to the fact that these are indeed separate images. They may overlap, but never completely flow into one another.

Bob: Yes.

Offscreen: Rather than having artists draw the in-between frames, Rotoshop — and correct me if I’m butchering the mechanics here — takes only the keyframes and uses its processing power to fill in the gaps, creating an effect that’s somewhat similar to Loving Vincent.

Bob: I think that’s the easiest way for a layman to understand it. When you get into the details, it’s a little bit more complicated than that, because the software is not intelligent enough to actually look at an entire keyframe drawn by one artist, compare it to another frame, and then figure out how the two connect. It’s more of an integrated process where the artist draws some brush strokes on one frame before skipping through the timeline to draw those same brush strokes a few frames later. It’s kind of a dumb piece of software, which means that the artist is totally in control of their work, and that’s what makes it look so fluid. It also means that our animation process doesn’t work how you think it does. Normally, you draw an entire frame and then you move forward. When you’re working with Rotoshop, you might focus on, say, the eyebrows, and go through the whole scene drawing those. Then you go back from the beginning and you draw the eyeballs. You build up the whole scene element by element, through time.
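
The stroke-by-stroke workflow Bob describes can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Rotoshop's actual code: it assumes a stroke is a list of 2D points, that the artist draws the same stroke with the same number of points on two frames, and that the frames in between are filled by straight linear interpolation. The names `StrokeKey` and `interpolate_stroke` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class StrokeKey:
    """One brush stroke as the artist drew it on a given frame."""
    frame: int
    points: List[Point]


def interpolate_stroke(start: StrokeKey, end: StrokeKey, frame: int) -> List[Point]:
    """Return the stroke's points on an in-between frame.

    Assumes the two keys describe the same stroke, point for point,
    so the software never has to work out which shapes correspond.
    """
    if len(start.points) != len(end.points):
        raise ValueError("both keys must have the same number of points")
    if not start.frame <= frame <= end.frame:
        raise ValueError("frame must lie between the two keys")
    if start.frame == end.frame:
        return list(start.points)
    # Fraction of the way from the first key to the second.
    t = (frame - start.frame) / (end.frame - start.frame)
    return [
        (ax + (bx - ax) * t, ay + (by - ay) * t)
        for (ax, ay), (bx, by) in zip(start.points, end.points)
    ]


# An eyebrow drawn on frame 0 and redrawn, slightly raised, on frame 4.
brow_start = StrokeKey(frame=0, points=[(0.0, 0.0), (10.0, 2.0)])
brow_end = StrokeKey(frame=4, points=[(0.0, 4.0), (10.0, 6.0)])
print(interpolate_stroke(brow_start, brow_end, frame=2))
```

Because the artist supplies the correspondence by redrawing the same stroke, the software can stay "dumb": it only moves points it was explicitly given, which fits Bob's description of building a scene element by element through time.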

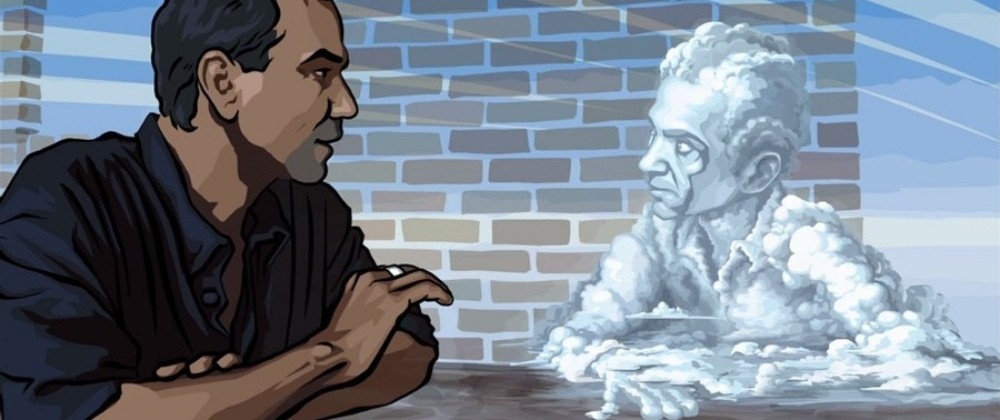

Waking Life

Offscreen: So is that where compositing comes in? Isolating different elements within a complex picture, so that it becomes easier for the artists or the software to work with?

Bob: Not really. Everything you see in the animation we do is either hand-drawn, or a direct link between shapes. We generally don’t use any kind of compositing. There may have been a specific case for some of the buildings in A Scanner Darkly, where they used 3D models that had to be merged with the video background to make them part of the scene. So, unless the scene involves a cartoon on a live action background, there’s no compositing.

Offscreen: A few years ago, you did an interview with “The Dissolve” about your experience on the production of A Scanner Darkly. In it, you mentioned that your goal for the film was to apply an upgraded version of the software you used for Waking Life, one that would be able to interpolate finer details. You also talked about the fear or danger of the final product looking like a filter. Are these topics related?

Bob: It’s something that’s been present from the very beginning, even when the stuff we were doing was so crude and primitive that nobody in their right mind would think it was a filter. As we went further into our projects, the trend was to make things more and more realistic, and as things get more realistic, it does start to resemble a filter. This process continues until you wonder why you’re even animating this since it just looks like a photograph. It’s kind of a double-edged sword. It can look very cool, but it also takes a lot more work, and you risk losing some of the craziness as well as the individual stamp of the artist. I guess it depends on the demands of the project. I don’t think A Scanner Darkly would have worked very well if it had changed styles, but it works for Waking Life.

Offscreen: And one of the reasons it works so well in Waking Life is, I think, that form follows function. The narrative of that film is segmented, made up of separate, seemingly unrelated conversations, and bouncing back and forth between more realistic and more exaggerated art styles reflects that structure.

Bob: You want your stylistic choices to match the subject matter. The other thing I will say about the whole photographic-realism thing is that it’s very easy for people to tell when it’s just a filter, no matter how good it looks. When an artist has really labored over something, and they have a talent for drawing — it just shows, you know. So far, it’s not something that AI can mimic…yet. I’m not saying it won’t get there, because I’ve been pretty impressed — astounded, really — by all the leaps and bounds that it has made in recent years.

Offscreen: Some of the scenes from Waking Life that resonated with me the most emotionally were the ones that were the least realistic. The opening scene, for instance, follows a boy whose eyes are drawn as if they are detached from his body; they’re enlarged and arranged at odd angles. Creative liberties like that are found all throughout Waking Life, but they’re much harder to find in A Scanner Darkly, and they’re almost non-existent in The Liberator. Trioscope in particular has been promoted as enhancing the performances of the actors, but I personally think that going the photorealistic route doesn’t necessarily help much in this regard.

Waking Life

Bob: That’s the problem. If you’re gonna make an animated movie, you have to care about the animation. The project is the animation, you know what I mean? The subject matter is obviously important, but the animation has got to be at least as important. The visual style can’t be just a layer that goes on top of what you’re doing — it has to be the thing you’re making. I mean, as an animator, is your goal to make animation, or to put frosting on your movie? We’ve been approached so many times by companies asking to run their work through our processor to help it look better. It’s not the right approach, in my opinion. A lot of these productions also don’t have a huge budget, which is another prerequisite for good animation.

Offscreen: And that is something you’ve mentioned before when talking about your experience on A Scanner Darkly as well, right?

Bob: With A Scanner Darkly, the team was trying to make what we thought would be a fantastic-looking movie, but they had not budgeted the amount that it was going to take. This was unfortunate, because that amount was only about 4 million, maybe 8 million dollars, which, for a feature starring Keanu Reeves and all these other stars, is like, really? You can’t set aside 8 million?

Offscreen: Speaking of budgets, that’s another field in which these reality-augmenting technologies stand out. Whenever huge, live-action productions want to have a big set piece, like a tank or a dragon or whatever, their best bet is to bring in CGI. But because these animated objects interact with real humans and environments, viewers will be able to notice even the tiniest flaws in their designs. If you use something like Trioscope, which brings every object in the film to the same level, so to speak, these flaws become easier to mask, meaning the final product will likely end up looking a lot better.

Bob: I think you’re absolutely right. That’s where this technique really shines. By having the footage you shot put into this process that makes everything look the same, you can do things you wouldn’t be able to do with standard resources. It has a lot of potential for lower budget projects that otherwise may not be able to depict an event like World War Two in a way that feels believable.

Offscreen: Forgive me in advance if what I am about to propose comes across as sacrilegious from your standpoint, but could you ever imagine a kind of hybrid between Rotoshop and Trioscope: software that optimizes CGI while retaining the humanity and artistic sensibility you get in your movies?

Bob: Sure. There’s probably a number of ways that you could combine these processes. You could probably use a filter on backgrounds and vehicles, for instance, and then use more of a hand-drawn approach on your lead characters.

Offscreen: In past interviews, you’ve talked briefly about the struggle of finding artists whose style works well with the way in which Rotoshop works. Do you actually think there are certain styles that suit the software better than others?

Bob: Personally, I always thought that you didn’t necessarily need someone with great animation skills, because the motion part is mostly provided by Rotoshop, right? Instead, what you are looking for is someone who just has a very good aesthetic sense, like painters and illustrators. When I first got into rotoscoping, I loved the idea of making projects where every artist had their own style, and all of our independent work has been done that way. But I do understand that that’s not viable for a lot of commercial projects.

Offscreen: To me, that’s the most exciting thing about Rotoshop. So many visually-inclined people are prevented from entering the animation industry because drawing motion isn’t their strongest suit. By doing that for them, the software opens up a world of opportunity to types of artists who, up until this point in entertainment history, have been forced to stick to static mediums.

Bob: That’s a good point. It does give visual artists a means to express themselves in ways that they might not have thought they could do before. I’m usually very careful not to claim that Rotoshop’s the same thing as animation, though, because it’s not. I’ve done traditional animation, and I’m not very great at it, but I really respect that craft.

Offscreen: Given that A Scanner Darkly came out almost a decade and a half ago, a lot of people are wondering: how has the Rotoshop software evolved since then?

Bob: I got out of animation ten years ago. I was fed up with the low budgets and commercial projects. The software did change a little bit after A Scanner Darkly, but it hasn’t changed in recent years, and in fact you can’t even run it on a current-generation Macintosh anymore; you have to use an emulator. I’m working with VR now. I spent over ten years doing nothing but rotoscoping, and while every bit of it was enjoyable, I’m happy to do something else for a while.

Offscreen: Well, I don’t doubt you will end up bringing the same level of innovation to the video game world that you brought to animation. That said, if you ever do decide you want to return to animation, I’ve always thought you could pull off a great collaboration with Ralph Bakshi. Just think about it: both of you guys know the industry inside and out, both of you got sick of it, and both of you found new passions. He’s working as a painter now, I think, so that may just make him a perfect fit for Rotoshop.

Bob: Bakshi is a legend, although I should mention that his rotoscoping from the seventies probably isn’t what influenced me. My journey was more about finding a faster way to work.