Projections: The Development and Politics of Digital Media in Audiovisual Performance

Audiovisual Integration Beyond the Cinema

Editor’s Intro:

This essay serves as the point of transition between the two general sections of this edition of Offscreen: “Sound in the Cinema and Beyond.” The first section consisted of two forums and three essays addressing various aspects of audiovisual integration in the cinema. Mitchell Akiyama’s piece reaches into the realm of sound-conscious filmmaking and then leads us out into the world beyond its borders, a world where artists strive to find new models for the joining of image and sound. In so doing, Akiyama takes us on a journey through the world of live audiovisual performance, from its historical beginnings through to its modern-day implementations in the world of laptop concerts. Much more than a valuable history lesson, the essay assesses the issues that artists have long been struggling with – in the cinema and beyond – when trying to find more organic solutions to the joining of sound and image. Along the way Akiyama engages with questions surrounding the relationship between performance and recorded material, synaesthetic experience and its potential exploration by new media, and the work of numerous contemporary audiovisual performers in search of the state of the art: a new audiovisual language. – RJ

__________

While music is often hailed as the harbinger of change, a privileged force at the vanguard of artistic invention, its performed incarnation has by comparison tended to be staunchly conservative. [1] Music, in the Western tradition, is performed for (we could even say at) an audience. The first European concert halls, built in the 18th century, placed the performer centre-stage with the audience fanned around that point at 180°. It is a convention that has secured and reproduced the cult of the star, the virtuoso. [2] Even in contemporary music forms – rock, hip-hop, etc. – the star stands centre-stage. Western audiences are used to watching performers use their voices or hit, pluck, scrape, and blow on objects that make sound. These actions are codified, conventional, and, as such, musical. Of course, the edges of this picture have occasionally been softened. Compositions within the acousmatic tradition of electroacoustic music, for example, are “diffused” with a mixing console located behind the audience. This is a notable departure from the visual focus of the traditional music performance, one that attempts to shift the location of the spectacle to the aural. [3] In the dance club the DJ, like the acousmatic diffusion artist, was usually removed from the gaze of the audience. Located in a booth at the edge of the dance floor, the disco DJ “eroded fixed definitions of performance, performer and audience” (Toop 1995:41). However, DJs rapidly took on star status and became objects of visual attention. [4] By the mid-1990s the DJ had been reborn as the “turntablist.” The scratching techniques that had been pioneered by DJs Kool Herc and Grandmaster Flash had been refined to the point where a DJ was no longer mixing existing tracks, but creating new music from recontextualized fragments.

Almost simultaneously, concerts showcasing turntablists began featuring projection screens showing close-ups of turntables, mixers, and hands, a development that re-emphasized the visual aspect of such performances.

Display Music

In the late 1990s the laptop computer made its on-stage debut as a musical instrument. Despite the broad range of schools and styles offered by a new generation of performers, the laptop concert quickly became entangled in the artist-centred performance schema. Kim Cascone writes, “the laptop musician often falls into the trap of adopting the codes used in pop music – locating the aura in the spectacle. Since many of the current musicians have come to electronic music through their involvement in the spectacle-oriented sub-cultures of DJ and dance music, the codes are transferred to serve as a safe and familiar framework in which to operate” (Cascone 2002:56). It has become clear in recent years that audiences weaned on guitar licks, the gesticulations of conductors, and the causal tautness of the turntablist’s scratch are “increasingly bored stiff by the sight of the auteur sitting onstage, illuminated by a dull blue glow, staring blankly at an invisible point somewhere deep in the screen. In this situation, for rock-educated audiences facing the stage expectantly, a twitch of the wrist becomes a moment of high drama” (Sherburne 2002:70). As a result, some variety of video projection has become almost de rigueur at laptop concerts, a pseudo-apology for failing to provide a visual cause for an aural effect.

Kim Cascone Performing Live

The inclusion of visuals has taken several forms. In its most transparent form the live projection has appeared as a direct line out from the laptop, mirroring for the audience the interface with which the musician is working. While this transparency might shed some light on the performer’s process, it doesn’t necessarily make for an entertaining experience. Far from creating contact with the sound and the body responsible for managing it, the sight of a cursor caressing a knob made of pixels enhances an alienating techno-fetishism that can deaden the experience. These situations usually recall product demos or technique clinics more than the concert experience that audiences often find themselves missing.

Digital Formalism

Signal Performing Live

Another approach to the integration of sound and projection that emerged in the late 1990s was an ultra-minimal, formalist attempt to create visualizations of a music that was correspondingly stripped-down and attentive to form. Granular Synthesis, Ryoji Ikeda, Pan Sonic, and artists recording for the German Raster-Noton label (including Carsten Nicolai, Frank Bretschneider, and Olaf Bender – together known as Signal) gave (and continue to give) synaesthetic performances in which the most basic of sound information – sine tones, bursts of white noise, and digital “clicks and cuts” – was translated to the screen in an equally pared-down visual language. This language often came in the form of geometric patterns whose configurations would change in direct relation to the music, or in the case of Pan Sonic, an oscilloscope that registered the group’s extreme sonic output. While these artists’ sound works have tended to be predicated on rendering data sensible, on the sonorisation of machines turned inside out – of processes made audible – the accompanying visual work has remained staunchly rooted in a “visual music” tradition whose first stirrings can be traced at least as far back as the first attempts to integrate music and colour.

Digression: “Visual Music”

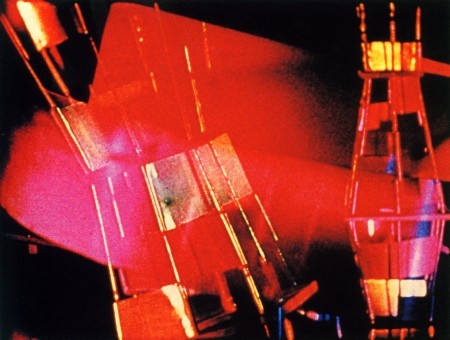

Still from Hy Hirsh’s Gyromorphosis (1956)

In 1734 the Jesuit priest and mathematician Louis Bertrand Castel built what is generally regarded as the first colour organ. The instrument, a modified harpsichord, employed a series of levers and pulleys attached to the keyboard to lift screens behind panes of coloured glass that were lit from behind. “Castel intended his instrument, originally operated with prisms, to demonstrate experimentally his systematic correlation of colors with notes in the musical scale” (Zilczer 2005:70). Perhaps the best-known early sound/colour work, the Russian composer Alexander Scriabin’s symphony Prometheus: The Poem of Fire, was first performed with coloured light projections on a screen in 1915. Scriabin, a well-known synaesthete, had arrived intuitively at the colour/pitch correspondences that were used in the piece, relationships that he considered to be self-evident (Mattis 2005:219). As cinematic projections became ubiquitous the locus of experimentation with light and music shifted. In the 1930s the German animator Oskar Fischinger began his first experiments in fusing sound with image. By drawing on and manipulating the soundtrack portion of the film stock, Fischinger developed his “Sounding Ornaments” and began to categorize the quality of sound that certain visual patterns produced.

Reprise: Digital Formalism

If colour was the formal basis for the relationship between sound and image at the beginning of the 20th century, its dominance might have been overturned by rhythm at its end. The coupling of pitch and colour might have lost its potency as the hegemony of the Western tonal system was given a century-long shake-up by the Dadaists, the Futurists, Musique Concrète, and, of course, John Cage. It seems that in this onslaught colour might have lost the ground on which it stood. This isn’t to suggest that a generation of artists has gone colour blind. Rather, in certain circles the cuts and taut synchronization of the aural and visual have been tethered to the influence of club culture and beat-driven music rather than Enlightenment physics and the classical canon. As well, this fusion has been propagated under the presumption that “in digital media…music and visual art are truly united, not only by the experiencing subject, the listener/viewer, but by the artist. They are created out of the same stuff, bits of electronic information, infinitely interchangeable” (Strick 2005:20). Artists like Carsten Nicolai, aka Alva Noto, have founded practices on rendering that stuff, those bits, perceptible. Nicolai’s sound work employs a sonic palette that alludes to the raw materials of digital sound – sine tones, white noise, and the sound glitches that are by-products of edits, cuts, and computer errors. The accompanying visuals for Nicolai’s concerts generally consist of a series of simple forms – rectangles, ellipses, etc. – that move in time with the rhythmic cadence of the music. Their reaction to certain accents – bass throbs, clicks, and pulses – suggests that they are of the same stuff as the sound. This assignment is arbitrary, but there is nevertheless an illusion of sensory immersion in the internal processes of a computer.

Nicolai describes his work as being “based on mechanical ideas,” and as such, he tries to “avoid artistic mystification.” He maintains, “the mechanism of [his] work is clear enough for you to follow their (sic) logic. Of course, there is an emotional sensibility to it, but that comes after” (Nicolai 2006). In Nicolai’s work the correspondence is not between a colour and a given pitch (in fact, the melodic content is subtle, if not atonal to most ears), but rooted in rhythm and volume.
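A correspondence rooted in rhythm and volume rather than pitch can be made concrete with a small sketch. The Python below is only an illustration of the general idea, not a reconstruction of Nicolai's own tools, which the sources cited here do not describe. Every name in it (`click_track`, `rms_envelope`, `shape_widths`), the frame size, and the pixel range are hypothetical choices made for the example. It measures the loudness of each short frame of a synthetic click track and maps that loudness onto the width of a rectangle.

```python
import math


def click_track(n_frames=8, frame_size=100, click_every=4):
    """Build a synthetic signal: a sine burst on every Nth frame, silence otherwise."""
    samples = []
    for frame_index in range(n_frames):
        amplitude = 1.0 if frame_index % click_every == 0 else 0.0
        for k in range(frame_size):
            samples.append(amplitude * math.sin(2 * math.pi * k / 20))
    return samples


def rms_envelope(samples, frame_size):
    """Return one RMS loudness value per non-overlapping frame."""
    envelope = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        envelope.append(math.sqrt(sum(s * s for s in frame) / frame_size))
    return envelope


def shape_widths(envelope, min_width=10, max_width=200):
    """Map each loudness value linearly onto a rectangle width in pixels."""
    peak = max(envelope) or 1.0  # avoid dividing by zero on a silent signal
    return [
        round(min_width + (level / peak) * (max_width - min_width))
        for level in envelope
    ]


# One width per frame: wide on the accented frames, narrow on the silent ones.
widths = shape_widths(rms_envelope(click_track(), frame_size=100))
print(widths)
```

The mapping is as arbitrary as the essay says such assignments are: any visual parameter (height, opacity, position) could stand in for width, and the sense that image and sound are "of the same stuff" comes from the tight timing rather than from the choice of parameter.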

Coming in from the Cold: The Audiovisual Drift

Fennesz Performing Live

While the Digital Formalist aesthetic and approach to digital audiovisual relations is far from defunct, in recent years there has been a minor revolt against what many consider to be its unemotional or clinical stage incarnation. As the laptop has embedded itself in milieus outside of the sphere of purist electronic music, electronic musicians once considered purist have adopted “traditional” instruments both in the studio and on the stage. Perhaps the most visible figure in this wave is the Austrian laptop musician Christian Fennesz. Although Fennesz doesn’t always appear onstage with a guitar (the instrument that has become an almost ubiquitous sound-source in electronic music), there is no mistaking the instrument’s presence, despite the patina of digital processing that usually envelops it. Fennesz’s performances are often accompanied by Jon Wozencroft’s video projections of rippling water, swaying trees, and other seemingly mundane sights. What is immediately apparent is that there is no essential synchronization between sound and image. Fennesz’s real-time manipulations of sound files aren’t anchored to any fixed timeline and, as such, every performance will yield a slightly different audiovisual combination. What is implicit with this form of performance is that the audience is responsible for discerning relations between sound and image. In this case the performer, whose back is almost always to the screen, isn’t sonically interpreting the image so much as creating a set of aleatory conditions under which relationships can be established.

Digression: Counterpoint

While the practice of sound performance accompanied by projections is not necessarily what Sergei Eisenstein had in mind when he laid out his call for the “contrapuntal use of sound” in cinema (Eisenstein 1949:258), such a combination of sound and image in live performance is related to his essential philosophy of asynchronization. The reality of projections in sound performance is that their content rarely directly represents what is happening simultaneously on the level of sound. That is to say that there is usually some form of metaphor or translation at play. Excepting the example of the contents of the performer’s screen being made visible to the audience mentioned above, or perhaps a real-time image of the performer being projected, what appears onscreen is an illustration of the sound rather than a depiction of its source. The audiovisual tautology of showing the source itself is usually reserved for stadium concerts at which spectators in the cheap seats are compensated for having to squint at tiny mobile dots on a distant stage, or for DJ gigs that have come to resemble clinics. Michel Chion describes the contrapuntal use of sound and image as being horizontal – as opposed to the convention of verticality in cinema. For Chion, verticality, or harmony, obviates the separation of a sound/image assemblage into an image and a soundtrack. “The sounds of a film, taken separately from the image, do not make for an internally coherent entity on equal footing with the image track” (Chion 1994:40). In film we’ve become acclimated to the appearance of sounds matching up with their sources – voices, footsteps, etc. These relationships form a series of audiovisual pairings that move through time like dance partners. Chion’s primary example of a typical horizontal scenario in film is the music video, “whose parallel image and sound tracks often have no precise relation – also exhibit a vigorous perceptual solidarity, marked by points of synchronization that occur throughout” (37). [5]

Reprise: The Audiovisual Drift

It should come as no surprise that in a laptop “concert,” projections are an adjunct to sound. They are a supplement intended to engage wandering eyes, to keep them focused on the stage, captivating them in the vicinity of the performer without asking for excessive performativity. As a result, qualities that might detract from a deep listening experience – narrativity or an overabundance of signification, distracting movement, etc. – are kept to a minimum. The Portuguese sound/video artist, Rafael Toral, describes his live projections as a visual complement to the music. For Toral, “it’s also a way to offer the audience another thing they can turn their attention to if they like. I don’t think I’m very interesting to be looked at while I’m performing” (Toral 2006). For idealists that have seen the fusion of sound and image as having “unparalleled potential for emotional and intellectual discourse and poetic expression” (Youngblood 1999:49), this might come as a bit of a letdown. The idealist in question here, Expanded Cinema author Gene Youngblood, envisions a medium that “would constitute an organic fusion of image and sound into a single unity, created by a single artist who writes and performs the music as well as conceiving and executing the images that are inseparable from it” (49). As long as laptop concerts continue to be presented under conditions that simultaneously evoke the cinematic milieu and the proscenium stage, it seems as though this fusion is an impossibility.

The Visualaudio Drift

Occupying a more rarefied position on the other side of the performative continuum are artists that put projection before sound. Although the tag “VJ” has been adopted by many in this community, it is a term that has also been used disdainfully from outside its boundaries. The tag, in mirroring the “magpie” practice of DJing, could be understood to imply an ethic of borrowing, of recombination and mixing of works lifted from popular media, cinema, or just about any other source that could be fed through a VCR or digitized. Early VJ projects did just this, sampling everything from the nightly news and Disney cartoons to Kung-fu movies and breakdancers. This practice arguably reached its popular apogee in the mid-to-late ’90s with the rise of A/V groups such as Emergency Broadcast Network, The Light Surgeons, and Hexstatic. The most notable exponent has been Coldcut, a British duo that has also been well known since the late 1980s for its DJ mixes, genre-bending electronic music, and remixes of hip-hop tracks. Their self-designed VJamm software (bundled with their 1999 album Let Us Replay) inspired a generation of novices, weaned on MTV from birth, to consider sound and image as inseparable. What set Coldcut’s software – and consequently their performances – apart from those of their peers was the taut synchronization of the video clip’s soundtrack with the accompanying sound. A typical Coldcut piece might include Bruce Lee’s fist connecting with an enemy’s face, providing a percussive snap on a downbeat while the theme from Disney’s The Jungle Book lumbers along underneath. At the time of VJamm’s release laptops barely had enough power to play back video clips, let alone process them with digital effects to any substantial degree. The technique of early digital VJing called for the harnessing of familiar audio/visual signifiers, usually in an attempt to recuperate nostalgic clichés.

The projections caused audiences fluent in hip-hop, ’70s and ’80s television, skateboard culture, and techno to coalesce amidst a matrix of rhythm and sound, the visual cues acting as cultural touch points.

Coldcut Performing Live

Computing power has ramped up along the curve of Moore’s law. [6] As well, the drop in cost of digital video cameras has made it more feasible for artists to generate their own content, a leap that has changed the VJ’s relationship to popular culture and sampling. In some cases these artists have received equal or even top billing at electronic arts festivals and events. [7] It seems as though the ability to alter a source – especially one that hasn’t been borrowed from another source – rather than simply decontextualize a sample has been fundamental in contributing to live video’s “legitimacy,” a trend evidenced by its inclusion in established media arts festivals and museums. But despite live video’s increasingly “polished” profile it still tends to follow music’s lead, tagging along like a younger sibling.

Audiovision

At this point, with the growing computing power available to artists, the software interfaces that have kept sound artists relegated to making electronic music and visual artists to video are beginning to merge. Jitter, the aforementioned Max/MSP extension, is part of a software bundle that had previously been limited to processing audio and MIDI information. In its integrated incarnation, Max/MSP/Jitter is a modular programming environment that allows users to construct “patches” that process signals – MIDI, audio, or video. Having the ability to process all three types of information in one interface means that the plastic differences between the media, at least at a signal level, are eroded – sound can be used to modulate and affect video and vice versa. An early and noteworthy example of this erosion is Joshua “Kit” Clayton and Sue Costabile’s 2002 performance, “Interruption” (see: http://www.elektrafestival.ca/video/fast_sue_costabile.html). The duo, concealed under white gauzy shrouds, control a flow of images that are interrupted, modulated, and altered by data captured by microphones and video cameras. The locus of the performance is ambiguous – there is an implied visual spectacle in that both performers are in front of the audience but both are hidden. Their faces are occasionally projected on the screen, situating their presence both in the physical, embodied, but implied forms hidden under the fabric, as well as on the screen in an incorporeal, transported state.
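The claim that sound can modulate video "and vice versa" once both are treated as signals can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Max/MSP/Jitter code: Jitter patches are built graphically, and nothing below reflects its actual objects. The function names, the greyscale frame stored as nested lists, and the audio level normalised to the range 0.0 to 1.0 are all hypothetical.

```python
def modulate_frame(frame, audio_level):
    """Scale every greyscale pixel (0-255) by an audio level clamped to [0.0, 1.0]."""
    level = min(max(audio_level, 0.0), 1.0)
    return [[int(pixel * level) for pixel in row] for row in frame]


def modulate_audio(samples, frame):
    """Use the frame's mean brightness, normalised to 0-1, as a gain on the audio."""
    pixels = [pixel for row in frame for pixel in row]
    gain = sum(pixels) / (255 * len(pixels))
    return [sample * gain for sample in samples]


# A tiny 2x2 "video frame" and a two-sample "audio buffer" (made-up values).
frame = [[0, 128], [255, 64]]
samples = [1.0, -0.5]

dimmed = modulate_frame(frame, audio_level=0.5)        # sound shaping the image
quieter = modulate_audio(samples, [[255, 255], [0, 0]])  # image shaping the sound
print(dimmed, quieter)
```

Both functions do the same thing, multiplying one stream of numbers by a value derived from the other, which is the sense in which the plastic differences between the media erode at the signal level.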

Sue Costabile Performing Live

While Clayton and Costabile’s work might not be the realization of the “organic fusion” of sound and image anticipated by Youngblood, it does offer a solution to the problem of their integration, of their being treated as the same stuff. And perhaps the possibility of performers using an integrated interface for sound and video might help them drop some of the baggage associated with the various clichés or defaults in their disciplines as they go about creating a new audiovisual language.

Sources Cited:

Cascone, Kim. (2002). Laptop Music – Counterfeiting Aura in the Age of Infinite Repetition. Parachute, 107, 52-58.

Chion, Michel. (1994). Audio-Vision: Sound on Screen, trans. Claudia Gorbman. New York: Columbia University Press.

Eisenstein, Sergei. (1949). Film Form: Essays in Film Theory. Trans. Jay Leyda. New York: Harcourt Brace Jovanovich.

Mattis, Olivia. (2005). Scriabin to Gershwin: Color Music from a Musical Perspective. Visual Music: Synaesthesia in Art and Music Since 1900. New York: Thames & Hudson, 210-227.

Nicolai, Carsten. (2006, January). Quoted on the Avanto Festival’s website. http://www.avantofestival.com/2005/main.php?ln=en&sub=4

Sherburne, Philip. (2002). Sound Art/Sound Bodies. Parachute, 107, 68-79.

Strick, Jeremy. (2005). Visual Music. Visual Music: Synaesthesia in Art and Music Since 1900. New York: Thames & Hudson, 15-20.

Toral, Rafael. (2006, January). www.rafaeltoral.net. http://www.rafaeltoral.net/05_press/interviews_by_subject/works.html

Youngblood, Gene. (1999). A Medium Matures: Video and the Cinematic Enterprise (1984). Ars Electronica: Facing the Future, ed. Timothy Druckrey. Cambridge: MIT Press, 49.

Zilczer, Judith. (2005). Music for the Eyes: Abstract Painting and Light Art. Visual Music: Synaesthesia in Art and Music Since 1900. New York: Thames & Hudson, 24-86.

Notes

- Jacques Attali claims that shifts in the economy of music have anticipated corresponding shifts in the larger political economy throughout European history: “Music is prophecy. Its styles and economic organization are ahead of the rest of society because it explores, much faster than material reality can, the entire range of possibilities of a given code… For this reason musicians, even when officially recognized, are dangerous, disturbing, and subversive.” Jacques Attali. Noise: The Political Economy of Music. Minneapolis: University of Minnesota Press, 1985, p. 11. ↩

- See Attali on Liszt and “The Genealogy of the Classical Interpreter.” Noise, pp. 68-77. ↩

- “One who has not experienced in the dark the sensation of hearing points of infinite distance, trajectories and waves, sudden whispers, so near, moving sound matter, in relief and in color, cannot imagine the invisible spectacle for the ears. Imagination gives wings to intangible sound. Acousmatic art is the art of mental representations triggered by sound.” Francis Dhomont. “Acousmatic Update.” 1996. Contact! 8.2 (Spring 1995). http://cec.concordia.ca/contact/contact82Dhom.html But, however enduring this acousmatic model of “performance” has been, its reach hasn’t extended much farther than the soundproofed walls of the academy. While many strains of electroacoustic music are still diffused in this way [one of the leading electroacoustic festivals, based in Montreal, is called “Rien à Voir,” or “Nothing to See”: http://www.rien.qc.ca], the performance of electronic music jumped several rails from its academic track with the advent of the DJ and club culture in the 1970s. [While other forms of music had incorporated electronic instruments – jazz fusion and progressive rock, to name a couple – these hybrids have been limited to impersonations of or extensions of existing instruments – keyboards, electronic drums, etc. As such, there isn’t any appreciable difference in these forms’ performance.] ↩

- At Sanctuary, a converted church and one of the renowned 1970s New York disco clubs, the DJ booth was set where the altar would have once been. ↩

- This analysis, however intriguing, unfortunately comes up short in that many, if not most, music videos (especially of the “pop” variety) present musicians strumming guitars, beating drums, or belting out vocals – usually in locales either impossible for or inhospitable to performance – in an appearance of synchronization. The fact that the musicians are actually being played by the music on a set or on location poses another set of problems. ↩

- Gordon Moore observed in 1965 that the number of components on an integrated circuit had been doubling roughly every year; in 1975 he revised the rate to a doubling roughly every two years. http://download.intel.com/research/silicon/moorespaper.pdf The promise that sophisticated modular software such as NATO, and later Jitter, offered is finally being realized. This development in hardware and software has led to a shift where the primary concern for live video to signify is replaced by an exploration of its plastic properties. More recently, artists like the now-defunct ensemble 242.pilots, Solu, Tina Frank, and D-Fuse have shown that a signal is a signal – that video can be delayed, distorted, pulled, and phased just like audio. [It should also be noted that both NATO and Jitter were developed as add-ons to the Max/MSP software, whose sound manipulation capabilities were already much more advanced.] In terms of tools and technique, video is now being treated in a way that is idiomatically similar to, if not derived from, real-time audio processing. ↩

- Although, at many ostensibly audiovisual or VJ festivals, sound artists are still listed before the video artists or VJs that will accompany them. See: http://cimatics.com/, http://www.mappingfestival.com/, http://www.projekttor.org/, etc. ↩

Notes